Authors

Updated

18 May 2026

Form Number

LP1651

PDF size

20 pages, 848 KB

Abstract

The Lenovo EveryScale HPC & AI Software Stack combines open-source with proprietary best-of-breed supercomputing software to provide the most consumable open-source HPC software stack embraced by all Lenovo HPC customers.

This product guide provides essential pre-sales information to understand the key features and components of the EveryScale HPC & AI Software Stack. The product guide is intended for technical specialists, sales specialists, sales engineers, IT architects, and other IT professionals who want to learn more about the Lenovo EveryScale HPC & AI Software Stack.

Change History

Changes in the May 18, 2026 update:

- Refreshed information on seller training courses

Introduction

The Lenovo EveryScale HPC & AI Software Stack combines open-source with proprietary best-of-breed Supercomputing software to provide the most consumable open-source HPC software stack embraced by all Lenovo HPC customers.

It provides a fully tested and supported, complete but customizable HPC software stack that enables administrators and users to utilize their Lenovo supercomputers optimally and in an environmentally sustainable way.

The software stack is built on the most widely adopted and maintained HPC community software for orchestration and management. It integrates third party components especially around programming environments and performance optimization to complement and enhance the capabilities, creating the organic umbrella in software and service to add value for our customers.

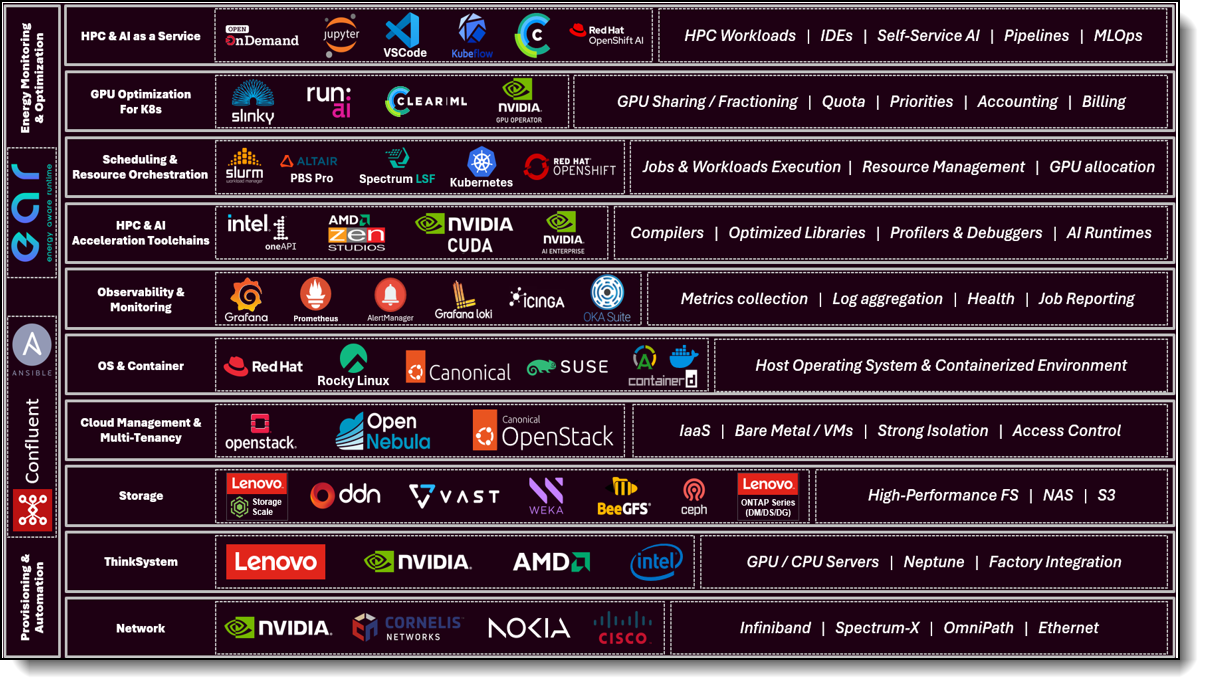

The software stack offers key software and support components for orchestration and management, programming environments and services and support, as shown in the following figure.

Did you know?

Lenovo EveryScale HPC & AI Software Stack is a modular software stack tailored to our customers' needs. Thoroughly tested, supported, and periodically updated, it combines the latest open-source HPC software releases to give organizations an agile and scalable IT infrastructure.

Benefits

The Lenovo EveryScale HPC & AI Software Stack provides the following benefits to customers.

Overcoming the Complexity of HPC Software

An HPC system software stack consists of dozens of components that administrators must integrate and validate before an organization’s HPC applications can run on top of the stack. Ensuring stable, reliable versions of all stack components is an enormous task due to the numerous interdependencies. This task is very time-consuming because of the constant release cycles and updates of individual components.

The Lenovo EveryScale HPC & AI Software Stack is fully tested, supported and periodically updated to combine the latest open-source HPC software releases, enabling organizations with an agile and scalable IT infrastructure.

Benefits of the Open-source Model

Going forward, in IDC's opinion, the development model exemplified by Linux is more workable. In this model, stack development is driven primarily by the open-source community and vendors offer supported distributions with additional capabilities for customers that require and are willing to pay for them. As the Linux initiative demonstrates, a community-based model like this has major advantages for enabling software to keep pace with requirements for HPC computing and storage hardware systems.

This model delivers new capabilities to users faster and makes HPC systems more productive, higher-returning investments.

A fair number of foundational open source HPC software components already exist (e.g., Open MPI, Rocky Linux, Slurm, OpenStack, and others). Many HPC community members are already taking advantage of these.

Customers will benefit from the HPC community, as the community works to integrate a multitude of components that are commonly used in HPC systems and are freely available for open source distribution.

The key open-source components of the software stack are:

- Confluent Management

Confluent is Lenovo-developed open-source software designed to discover, provision, and manage HPC clusters and the nodes that comprise them. Confluent provides powerful tooling to deploy and update software and firmware to multiple nodes simultaneously, with simple and readable modern software syntax.

- Slurm Orchestration

Slurm is integrated as an open-source, flexible, and modern choice for managing complex workloads. It enables faster processing and optimal utilization of the large-scale, specialized high-performance and AI resources that Lenovo systems provide for each workload. Lenovo provides support in partnership with SchedMD.

- Energy Aware Runtime

EAR is a powerful European open-source energy management suite supporting everything from monitoring through power capping to live optimization during application runtime. Lenovo is collaborating with Barcelona Supercomputing Centre (BSC) and EAS4DC on its continuous development and support, and offers three versions with differentiating capabilities.

- Open OnDemand

Open OnDemand (OOD) is an open source, web-based access portal that provides a unified Graphical User Interface (GUI) for seamless access to HPC and AI clusters. It enables users to securely access compute resources, submit and monitor jobs, and launch rich interactive applications, such as Jupyter Notebooks, VS Code, RStudio, and TensorBoard, directly from a standard web browser. Through these interactive environments, OOD enables end-users to run complete AI and data-science workflows based on popular frameworks like TensorFlow, PyTorch, RAPIDS, and more, without needing to manage the underlying cluster complexity. Supporting multiple resource managers, including Slurm, PBS Pro, LSF, and Kubernetes, Open OnDemand makes it easy to consolidate HPC and AI workloads on a shared infrastructure, significantly improving usability, accessibility, and overall productivity while eliminating the need for command-line expertise.

Orchestration and management

The following orchestration and management software is available with Lenovo EveryScale HPC & AI Software Stack:

- Confluent (Best Recipe interoperability)

Confluent is Lenovo-developed open source software designed to discover, provision, and manage HPC clusters and the nodes that comprise them. Confluent provides powerful tooling to deploy and update software and firmware to multiple nodes simultaneously, with simple and readable modern software syntax. Additionally, Confluent’s performance scales seamlessly from small workstation clusters to thousand-plus node supercomputers. For more information, see the Confluent documentation and the Lenovo Confluent Management Software paper.

- Slurm

Slurm is a modern, open-source scheduler designed specifically to satisfy the demanding needs of high-performance computing (HPC), high-throughput computing (HTC), and AI. Slurm is developed and maintained by SchedMD®. Slurm maximizes workload throughput, scale, and reliability, and delivers results in the fastest possible time while optimizing resource utilization and meeting organizational priorities. Slurm automates job scheduling to help administrators and users manage the complexities of on-premises, hybrid, or cloud workspaces. The Slurm workload manager executes quickly and reliably, increasing productivity while decreasing costs. Slurm’s modern, plug-in-based architecture runs on a RESTful API supporting both large and small HPC, HTC, and AI environments. Allow your teams to focus on their work while Slurm manages their workloads.

- SchedMD Slurm Support service capabilities for Lenovo HPC and AI systems include:

- Level 3 Support: High-performance systems must run at high utilization and performance to meet end users' and management's return-on-investment expectations. Customers covered by a support contract can reach out to SchedMD engineering experts to promptly resolve complex workload management issues and receive quick answers to complex configuration questions, instead of taking weeks or even months to try to resolve them in-house.

- Remote Consulting: Valuable assistance and implementation expertise that speeds custom configuration tuning to increase throughput and utilization efficiency on complex and large-scale systems. Customers can review cluster requirements, operating environment, and organizational goals directly with a Slurm engineer to optimize the configuration and meet organizational needs.

- Tailored Slurm Training: Expert training, tailored to the site, that empowers users to harness Slurm capabilities to speed projects and increase technology adoption. A customer scoping call before the onsite instruction ensures coverage of specific use cases that address the organization's needs. In-depth and comprehensive technical training is delivered in a hands-on lab workshop format to help users apply Slurm best practices to their site-specific use cases and configuration.

- NVIDIA Unified Fabric Manager (UFM) (ISV supported)

NVIDIA Unified Fabric Manager (UFM) is InfiniBand networking management software that combines enhanced, real-time network telemetry with fabric visibility and control to support scale-out InfiniBand data centers. For more information, see the NVIDIA UFM product page.

The two UFM offerings available from Lenovo are as follows:

- UFM Telemetry for Real-Time Monitoring

The UFM Telemetry platform provides network validation tools to monitor network performance and conditions, capturing and streaming rich real-time network telemetry information, application workload usage, and system configuration to an on-premises or cloud-based database for further analysis.

- UFM Enterprise for Fabric Visibility and Control

The UFM Enterprise platform combines the benefits of UFM Telemetry with enhanced network monitoring and management. It performs automated network discovery and provisioning, traffic monitoring, and congestion discovery. It also enables job schedule provisioning and integrates with industry-leading job schedulers and cloud and cluster managers, including Slurm and Platform Load Sharing Facility (LSF).

The following table lists all Orchestration and Management software available with Lenovo EveryScale HPC & AI Software Stack.

* SchedMD Slurm Onsite or Remote 3-day Training: in-depth and comprehensive site-specific technical training. Can only be added to a support purchase.

** SchedMD Slurm Consulting w/Sr.Engineer 2REMOTE Sessions (Up to 8 hrs): review initial Slurm setup, in-depth technical chats around specific Slurm topics & review site config for optimization & best practices. Required with support purchase, cannot be purchased separately.

Note: SchedMD Slurm Consulting w/Sr.Engineer 2REMOTE Sessions option must be selected and locked in for every SchedMD support selection.

SchedMD Slurm Onsite or Remote 3-day Training option must be selected and locked in for every SchedMD Commercial support selection. Optional for EDU & Government support selections.
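The selection rules in the notes above can be read as a simple validation check. The following is a minimal sketch only: the names `SupportSelection` and `missing_required_options`, and the lowercase segment labels, are hypothetical and do not come from any Lenovo or SchedMD configurator.

```python
from dataclasses import dataclass

# Hypothetical segment labels; the guide names Commercial, EDU, and Government.
SEGMENTS = {"commercial", "edu", "government"}


@dataclass
class SupportSelection:
    """Illustrative model of one SchedMD support selection (not a real API)."""
    segment: str
    has_consulting: bool  # remote consulting sessions option selected
    has_training: bool    # onsite or remote 3-day training option selected


def missing_required_options(sel: SupportSelection) -> list[str]:
    """Return the options that the notes require but the selection lacks."""
    if sel.segment not in SEGMENTS:
        raise ValueError(f"unknown segment: {sel.segment!r}")
    missing = []
    # Consulting must accompany every support selection.
    if not sel.has_consulting:
        missing.append("consulting")
    # Training is mandatory for Commercial, optional for EDU and Government.
    if sel.segment == "commercial" and not sel.has_training:
        missing.append("training")
    return missing
```

An empty list means the selection satisfies both notes; for example, an EDU selection with consulting but without training passes, while the same selection for a Commercial customer reports `training` as missing.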

Programming environment

The following programming software is available with Lenovo EveryScale HPC & AI Software Stack.

- NVIDIA CUDA

NVIDIA CUDA is a parallel computing platform and programming model for general computing on graphics processing units (GPUs). With CUDA, developers are able to dramatically speed up computing applications by harnessing the power of GPUs. When using CUDA, developers program in popular languages such as C, C++, Fortran, Python, and MATLAB and express parallelism through extensions in the form of a few basic keywords. For more information, see the NVIDIA CUDA Zone.

- NVIDIA HPC Software Development Kit

The NVIDIA HPC SDK C, C++, and Fortran compilers support GPU acceleration of HPC modeling and simulation applications with standard C++ and Fortran, OpenACC directives, and CUDA. GPU-accelerated math libraries maximize performance on common HPC algorithms, and optimized communications libraries enable standards-based multi-GPU and scalable systems programming. Performance profiling and debugging tools simplify porting and optimization of HPC applications, and containerization tools enable easy deployment on-premises or in the cloud. For more information, see the NVIDIA HPC SDK.

- Intel oneAPI

The Intel oneAPI Base & HPC Toolkit is a comprehensive software development suite designed to empower developers in creating HPC & AI solutions that exploit the full potential of modern hardware architectures. This toolkit encompasses an array of advanced tools, libraries, and compilers, enabling programmers to efficiently design, optimize, and deploy parallel applications across diverse computing platforms, including CPUs, GPUs, and FPGAs. With a focus on fostering code portability and performance scalability, the Intel oneAPI Base & HPC Toolkit equips developers with the means to enhance productivity, streamline software development, and achieve exceptional performance outcomes in the realm of high-performance computing.

- For more information, see Intel® oneAPI Base and HPC Toolkit.

- System-based pricing (introduced in mid-2023) is tiered by system size: small (64-256 nodes), medium (257-512 nodes), or large (512+ nodes).

- Developer-based pricing is for systems with fewer than 64 nodes and offers support for a limited number of users.

- Part numbers are available for Commercial or Academic customers.

- Support Renewals are available.

- Commercial parts have different part numbers if they are quoted with or without Intel hardware.
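The node-count thresholds above map naturally onto a small lookup. This is a minimal sketch under stated assumptions: the function name `oneapi_pricing_model` and the returned labels are hypothetical, and because the guide lists both "257-512" and "512+", the sketch assumes a 512-node system falls in the medium tier and the large tier starts at 513 nodes.

```python
def oneapi_pricing_model(nodes: int) -> str:
    """Map a node count to the pricing model described in the guide.

    Assumption: exactly 512 nodes is treated as "medium"; the guide's
    "512+" is read here as "more than 512".
    """
    if nodes < 1:
        raise ValueError("node count must be a positive integer")
    if nodes < 64:
        # Developer-based pricing covers systems with fewer than 64 nodes.
        return "developer-based"
    if nodes <= 256:
        return "system-based (small)"
    if nodes <= 512:
        return "system-based (medium)"
    return "system-based (large)"
```

The tier only narrows the choice; the actual part number still depends on whether the customer is Commercial or Academic and, for Commercial parts, on whether Intel hardware is quoted.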

The following tables list the relevant ordering part numbers.

AI: application workload and advanced GPU orchestration

The following software suite is available with Lenovo EveryScale HPC & AI Software Stack.

- NVIDIA AI Enterprise

NVAIE is an end-to-end, cloud-native suite of AI and data analytics software, optimized, certified, and supported by NVIDIA to run on VMware vSphere and bare-metal with NVIDIA-Certified Systems™. It includes key enabling technologies from NVIDIA for rapid deployment, management, and scaling of AI workloads in the modern hybrid cloud. NVAIE is licensed on a per-GPU basis and can be purchased as either a perpetual license with support services, or as an annual or multi-year subscription.

- The perpetual license provides the right to use the NVIDIA AI Enterprise software indefinitely, with no expiration. NVIDIA AI Enterprise with perpetual licenses must be purchased in conjunction with one-year, three-year, or five-year support services. A one-year support service is also available for renewals.

- The subscription offerings are an affordable option that gives IT departments more flexibility in managing license volumes. NVIDIA AI Enterprise software products with subscription include support services for the duration of the software’s subscription license.

The features of NVAIE Software are listed in the following table.

Note: Maximum 10 concurrent VMs per product license.

- NVIDIA Run:ai

NVIDIA Run:ai is an enterprise platform for AI workloads and GPU orchestration, accelerating AI operations with dynamic orchestration across the AI life cycle, maximizing GPU efficiency, and integrating seamlessly into hybrid AI infrastructure. The platform provides features such as AI-native workload orchestration, unified AI infrastructure management, flexible AI deployment, and open architecture, supporting public clouds, private clouds, hybrid environments, and on-premises data centers.

For more information, see the NVIDIA Run:ai product page:

https://www.nvidia.com/en-us/software/run-ai/

- NVIDIA Mission Control

NVIDIA Mission Control is a software platform that powers AI factory operations, bringing instant agility to inference and training workloads, and full-stack intelligence for world-class infrastructure resiliency. It simplifies AI operations, from cluster deployment to workload orchestration, providing benefits such as instant agility, hyperscale-grade efficiency, and gold-standard infrastructure resiliency. The platform includes features like seamless workload orchestration, energy-optimized power profiles, and autonomous job recovery.

For more information, see the NVIDIA Mission Control product page:

https://www.nvidia.com/en-us/data-center/mission-control/

- ClearML: Innovating AI at Scale with Lenovo

As a Lenovo innovator in the AI software stack, ClearML delivers an enterprise-grade AI and GenAI platform that accelerates the entire AI lifecycle. Designed for flexibility and performance, ClearML provides a cloud-like experience with the efficiency and security of Lenovo’s infrastructure solutions.

ClearML’s architecture is built on three layers – Infrastructure Control Plane, AI Development Center, and GenAI App Engine – each enabling Lenovo customers to unlock the full potential of AI:

- Infrastructure Control Plane – ClearML enables GPU optimization and management across heterogeneous environments with dynamic resource allocation for NVIDIA and AMD GPUs, granular quota management with per-tenant billing, and isolated multi-tenancy. The platform supports silicon-agnostic workload scheduling across any environment, enables hybrid multi-cluster orchestration with cloud bursting, and deploys flexibly across on-premises or air-gapped infrastructure.

- Dynamic Fractional GPUs – ClearML enables highly efficient use of GPU resources by allowing granular allocation and sharing of GPU fractions. This dynamic approach optimizes performance for a wide range of AI workloads, supporting both NVIDIA MIG-capable and non-MIG devices and AMD MI300X and newer GPUs. It allows organizations to maximize their hardware investment and adapt resource allocation in real time as workload demands change.

- Multi-tenant, Multi-cluster – The platform supports secure, dynamic resource sharing across multiple users and clusters. Its secure multi-tenancy model includes robust network isolation, ensuring privacy and compliance for different teams or projects. Resources are allocated flexibly based on real-time demand, and new tenants can be onboarded quickly without the need for dedicated clusters, maximizing overall resource utilization.

- AI Development Center – The platform offers a comprehensive workbench for AI/ML development, integrating tools for data management, experiment tracking, and pipeline orchestration. Users can launch familiar development environments like Jupyter and VSCode with a single click, manage datasets, and automate complex AI workflows, all from a unified interface. This abstraction allows data scientists and engineers to focus on their code and experiments, minimizing the need to interact directly with Kubernetes or underlying infrastructure.

- GenAI App Engine – ClearML simplifies the testing, deployment, and management of large language models (LLMs). With one-click access, users can deploy custom or off-the-shelf (Hugging Face) models, NVIDIA NIM containers, or custom applications with pre-configured networking and RBAC authentication. An endpoint dashboard provides teams with full visibility on usage and performance, and static routing provides horizontal scalability for peak periods. The GenAI App Engine supports secure deployment of both open-source and custom models.

- NVIDIA AI Enterprise integration – ClearML integrates with NVIDIA AI Enterprise (NVAIE), including dynamic license allocation that allows organizations to optimize costs and scale efficiently. The platform orchestrates NVIDIA-optimized containers (such as NIM and Triton), accelerating AI development and providing observability for inference workloads.

Lenovo and ClearML together deliver a secure, scalable, and innovative AI platform – empowering organizations to accelerate innovation and turn vision into production-grade AI solutions.

For more information: https://clear.ml/

The following tables list the ordering part numbers.

Note: SKUs marked ‘INC’ are part of NVIDIA’s Inception Program, a global initiative supporting AI startups.

Learn more at: https://www.nvidia.com/en-us/startups/

Energy monitoring and optimization

The following Energy monitoring and optimization software is available with Lenovo EveryScale HPC & AI Software Stack.

- Energy Aware Runtime (EAR)

EAR is licensed under EPL 2.0 and developed by BSC and Energy Aware Solutions (EAS). While the EAR core version remains open-source, EAR also features extensions developed by the EAS team and provided under a proprietary license. EAR packs are the different solutions provided by EAS, which include the EAR core, EAR extensions, and EAS installation and support services. EAR packs can be purchased from Lenovo under the EveryScale HPC & AI Software Stack CTO and are delivered through EAS.

- EAS offers three EAR packs:

- EAR Detective Pro – Includes Data Center monitoring, Analysis and Accounting modules.

- EAR Optimizer – Includes all features of EAR Detective Pro, with the addition of the energy optimization module for both CPU and GPU.

- EAR Optimizer Pro – Builds upon EAR Optimizer by introducing the smart power capping module.

EAR is compatible with Slurm, PBS Pro and Kubernetes clusters.

The following table lists the ordering part numbers for EAR licensing, support, and services.

Note: These part numbers are applicable only to clusters that meet all of the following criteria:

- Contain a single CPU type and a single GPU type

- Have a combined total of up to 1,000 sockets (including both CPU and GPU sockets)

- Do not require smart power capping (i.e. do not use EAR Optimizer Pro)

Special BID Requirement: A Special BID from EAS is required in the following scenarios:

- The cluster exceeds 1,000 sockets

- The cluster includes multiple CPU or GPU types

- The deployment requires smart power capping, available only through EAR Optimizer Pro
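The eligibility criteria and Special BID scenarios above are complementary, so they can be expressed as one check. The following is a minimal sketch, not an EAS or Lenovo tool: the `Cluster` fields and the function `special_bid_reasons` are hypothetical names, and the sketch assumes "multiple types" means more than one distinct CPU model or more than one distinct GPU model.

```python
from dataclasses import dataclass

SOCKET_LIMIT = 1000  # combined CPU and GPU sockets, per the note above


@dataclass
class Cluster:
    """Illustrative cluster description (field names are assumptions)."""
    cpu_types: int             # number of distinct CPU models
    gpu_types: int             # number of distinct GPU models
    cpu_sockets: int
    gpu_sockets: int
    needs_power_capping: bool  # True if EAR Optimizer Pro is required


def special_bid_reasons(cluster: Cluster) -> list[str]:
    """Return why a Special BID is needed; an empty list means the
    standard part numbers apply."""
    reasons = []
    if cluster.cpu_sockets + cluster.gpu_sockets > SOCKET_LIMIT:
        reasons.append("exceeds 1,000 sockets")
    if cluster.cpu_types > 1 or cluster.gpu_types > 1:
        reasons.append("multiple CPU or GPU types")
    if cluster.needs_power_capping:
        reasons.append("requires smart power capping")
    return reasons
```

For example, a cluster with 512 CPU sockets and 488 GPU sockets of a single type each sits exactly at the 1,000-socket limit and still qualifies for the standard part numbers, provided it does not need smart power capping.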

Resources

For more information, see these resources:

- Implementing Lenovo Confluent Management Software (Planning / Implementation):

https://lenovopress.lenovo.com/lp2266-implementing-lenovo-confluent-management-software

- Lenovo DCSC configurator:

https://dcsc.lenovo.com

- Optimizing Power and Energy in HPC data centers with Energy Aware Runtime:

https://lenovopress.lenovo.com/lp1646

- Energy Aware Runtime software and documentation:

http://www.eas4dc.com

- Lenovo Confluent documentation:

https://hpc.lenovo.com/users/documentation/

- Lenovo Compute Orchestration in HPC Data Centers with Slurm - Solution Brief:

https://lenovopress.lenovo.com/lp1701-lenovo-compute-orchestration-in-hpc-data-centers-with-slurm

Trademarks

Lenovo and the Lenovo logo are trademarks or registered trademarks of Lenovo in the United States, other countries, or both. A current list of Lenovo trademarks is available on the Web at https://www.lenovo.com/us/en/legal/copytrade/.

The following terms are trademarks of Lenovo in the United States, other countries, or both:

Lenovo®

The following terms are trademarks of other companies:

AMD is a trademark of Advanced Micro Devices, Inc.

Intel® and the Intel logo are trademarks of Intel Corporation or its subsidiaries.

Linux® is the trademark of Linus Torvalds in the U.S. and other countries.

Windows® is a trademark of Microsoft Corporation in the United States, other countries, or both.

LSF® is a trademark of IBM in the United States, other countries, or both.

Other company, product, or service names may be trademarks or service marks of others.

Full Change History

Changes in the April 30, 2026 update:

- Replaced image under - Introduction section

- Updates under - Orchestration and management section

- Removed LiCO parts from the stack and added a new paragraph for Open OnDemand

- Removed LiCO reference in the Confluent and Slurm text

- Removed LiCO P/Ns (table 2)

- Updates under - AI: application workload and advanced GPU orchestration section

- Added NVIDIA Mission Control description

- Added ClearML description

Changes in the December 8, 2025 update:

- Replaced image under - Introduction section

Changes in the July 31, 2025 update:

- Replaced image under - Introduction section

- The following new subsections have been added under - Software components section

- SchedMD Slurm Support service

- AI: application workload and advanced GPU orchestration

- Energy monitoring and optimization

Changes in the April 2, 2024 update:

- The LiCO Kubernetes K8S version part numbers have been withdrawn from marketing - Orchestration and management section

Changes in the February 21, 2024 update:

- The following have been reactivated under - Software components section

- Lenovo Confluent 1 Year Support per managed node, 7S090039WW

- Lenovo Confluent 3 Year Support per managed node, 7S09003AWW

- Lenovo Confluent 5 Year Support per managed node, 7S09003BWW

- Lenovo Confluent 1 Extension Year Support per managed node, 7S09003CWW

Changes in the February 20, 2024 update:

- Updates to "Intel oneAPI options" under - Support components section

- Added the following new - Seller training courses section

Changes in the December 5, 2023 update:

- Withdrawn the following "Lenovo Confluent support" under - Software components section

- Lenovo Confluent 1 Year Support per managed node, 7S090039WW

- Lenovo Confluent 3 Year Support per managed node, 7S09003AWW

- Lenovo Confluent 5 Year Support per managed node, 7S09003BWW

- Lenovo Confluent 1 Extension Year Support per managed node, 7S09003CWW

Changes in the October 11, 2023 update:

- Updates to the Software components section:

- Part number updates - NVIDIA UFM Telemetry

Changes in the September 19, 2023 update:

- Updates to the Support Components section:

- Added additional details to - EAS Service and Support for EAR - feature

- Added additional options to - Intel oneAPI options - table

Changes in the August 14, 2023 update:

- Added new label to the title and multiple components - EveryScale - to align with new rebranding

- Updates to Software components section:

- Intel oneAPI Base & HPC Toolkit (Multi-Node) Support

- LiCO customization service

Changes in the January 9, 2023 update:

- Added new SKUs in the Software components section:

- Lenovo Confluent support

- NVIDIA CUDA

- NVIDIA HPC SDK

- EAS Service and Support for EAR

First published: November 10, 2022
