Published: 27 Mar 2026

Form Number: LP2409

PDF size: 5 pages, 292 KB

Abstract

This article explores how enterprises are transitioning from AI experimentation to production-scale “AI factories”, highlighting the critical role of trust in deploying AI with sensitive data. It examines the challenges across data owners, model providers, and infrastructure operators, and introduces Confidential Computing as a key enabler of secure AI execution. The article also showcases Lenovo’s hybrid AI infrastructure and validated architectures designed to deliver scalable, high-performance, and secure deployments.

By combining Confidential Computing with enterprise-grade platforms, organizations can unlock AI value while ensuring data privacy, governance, and compliance in real-world environments.

Introduction

At NVIDIA GTC, the AI industry continues to demonstrate how artificial intelligence is moving rapidly from experimentation to production. Enterprises are no longer simply testing models; they are building AI factories capable of producing intelligence at scale. However, a fundamental challenge remains: trust.

Most enterprise data that organizations want to use with AI does not reside in the public cloud. It exists across on-premises systems, data centers, and legacy repositories, often containing highly sensitive information such as healthcare records, financial data, proprietary research, and intellectual property. As a result, organizations must balance innovation with strict requirements around data privacy, governance, and security.

This is where Confidential Computing and secure AI infrastructure become essential.

The Trust Challenge in Enterprise AI

As enterprises deploy frontier models and agentic AI workloads, a complex trust dilemma emerges between three key stakeholders:

- Model providers who need to protect proprietary model weights and algorithms

- Infrastructure providers responsible for operating the compute platforms

- Data owners who must ensure sensitive enterprise data remains protected

Traditional computing environments leave data in use unencrypted, meaning sensitive information and model intellectual property can potentially be exposed during execution.

Confidential Computing addresses this challenge by enabling workloads to run inside hardware-based trusted execution environments (TEEs), where data and models remain cryptographically protected even while being processed. This approach allows enterprises to deploy AI with strong assurances that both sensitive data and model intellectual property remain protected.
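The trust model described above is commonly enforced through attestation: a key holder releases a decryption key only after the execution environment proves what code it is running. The following is a minimal conceptual sketch of that gating step, not Lenovo's or NVIDIA's implementation. All names (`measure`, `sign_report`, `release_key`) are hypothetical, and an HMAC with a shared secret stands in for the asymmetric, hardware-rooted signature that a real TEE would produce.

```python
import hashlib
import hmac
import secrets
from typing import Optional, Set


def measure(workload_image: bytes) -> str:
    """Stand-in for a TEE measurement: a hash of the code loaded into the enclave."""
    return hashlib.sha256(workload_image).hexdigest()


def sign_report(measurement: str, nonce: bytes, hardware_key: bytes) -> bytes:
    """Stand-in for a hardware-signed attestation report.

    Assumption: real TEEs sign with an asymmetric key rooted in the silicon;
    an HMAC is used here only to keep the sketch self-contained.
    """
    return hmac.new(hardware_key, nonce + measurement.encode(), hashlib.sha256).digest()


def release_key(
    measurement: str,
    nonce: bytes,
    signature: bytes,
    hardware_key: bytes,
    approved: Set[str],
    data_key: bytes,
) -> Optional[bytes]:
    """Return the data key only if the report is authentic and the workload is approved."""
    expected = sign_report(measurement, nonce, hardware_key)
    if not hmac.compare_digest(expected, signature):
        return None  # report was not produced by the trusted hardware
    if measurement not in approved:
        return None  # workload is not on the data owner's allowlist
    return data_key


# Usage: the data owner approves one workload image and challenges with a fresh nonce.
hardware_key = secrets.token_bytes(32)
data_key = secrets.token_bytes(32)
approved = {measure(b"inference-server-v1")}

nonce = secrets.token_bytes(16)
m = measure(b"inference-server-v1")
granted = release_key(m, nonce, sign_report(m, nonce, hardware_key),
                      hardware_key, approved, data_key)
print("key released:", granted is not None)
```

The fresh nonce in each challenge prevents an old report from being replayed, and the allowlist lets the data owner, rather than the infrastructure operator, decide which workloads may see the key.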

From AI Experiments to Enterprise AI Factories

As organizations move toward production AI systems, infrastructure requirements expand significantly. AI factories must support:

- Massive volumes of unstructured enterprise data

- High-performance GPU compute

- Scalable AI pipelines for training and inference

- Secure deployment of frontier models

- Consistent governance and data sovereignty

To support this transformation, enterprises need validated architectures that combine performance, scalability, and security. Lenovo’s AI infrastructure portfolio is designed specifically to enable these enterprise AI factories.

Lenovo Hybrid AI Platforms for Enterprise Deployment

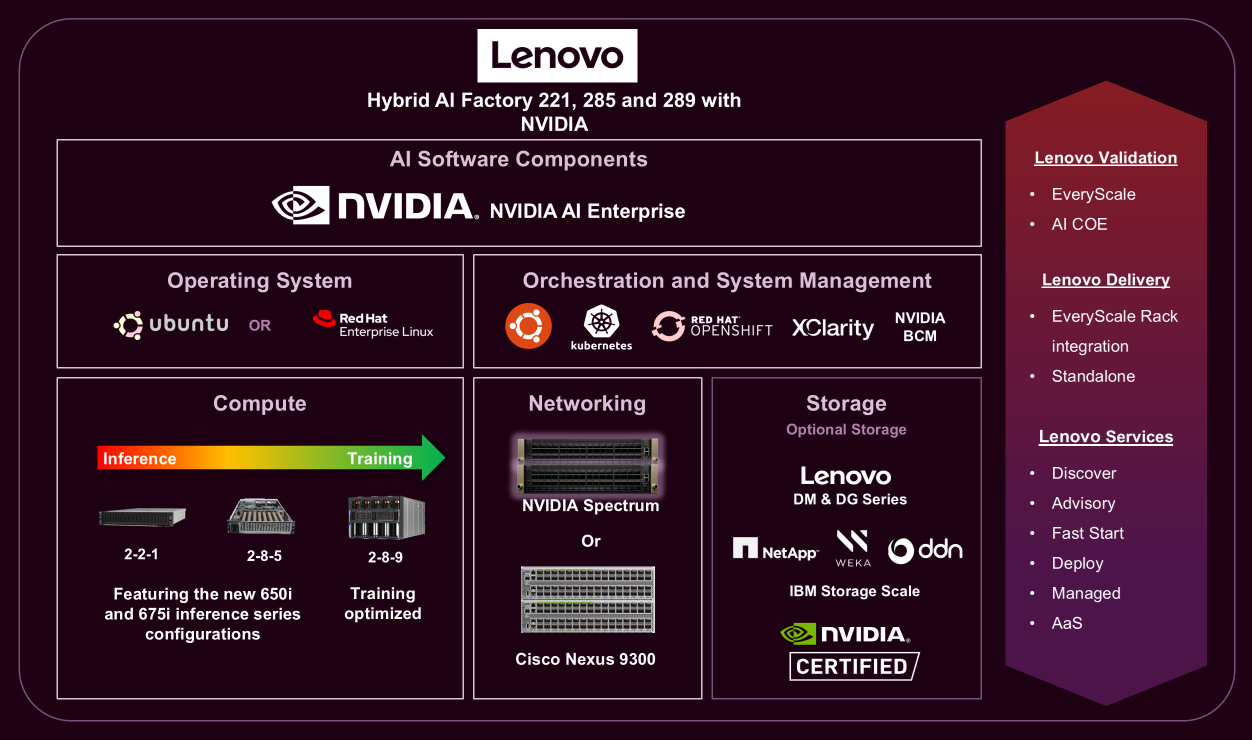

One example is the Lenovo Hybrid AI 285 platform, designed to accelerate enterprise AI deployments across hybrid environments. The platform leverages Lenovo ThinkSystem GPU-rich servers, supporting NVIDIA GPUs and the NVIDIA AI Enterprise software to power demanding AI workloads such as large-language-model inference, fine-tuning, and enterprise retrieval-augmented generation (RAG) pipelines.

With a 2-CPU, 8-GPU architecture and high-speed networking, the platform enables organizations to scale from small deployments to large AI clusters while maintaining high performance and operational efficiency.

These validated configurations reduce deployment complexity and help organizations accelerate time-to-value for production AI initiatives.

Unlocking Enterprise Data with AI Data Platforms

Enterprise AI systems depend not only on compute performance but also on the ability to access and operationalize vast amounts of enterprise data.

Lenovo’s Validated Design for High-Performance AI Data Platforms provides a scalable architecture that enables organizations to securely manage large volumes of unstructured data, including documents, images, audio, and video, while supporting modern AI workflows such as embedding generation and retrieval-augmented generation.

The design integrates high-performance object storage with AI data services to deliver:

- High-throughput data ingestion

- Low-latency retrieval for inference pipelines

- Scalable storage architectures optimized for GPU workloads

- Enterprise-grade security and governance

These capabilities ensure that AI systems can access the data they need while organizations maintain full control over data sovereignty and compliance requirements.
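To make the retrieval step of a retrieval-augmented generation pipeline concrete, the sketch below ranks stored items by cosine similarity to a query embedding. It is an illustration under stated assumptions only: the three-dimensional vectors and file names are made up, and a production data platform would use a real embedding model and a vector index rather than a Python dictionary.

```python
import math
from typing import Dict, List


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: List[float], index: Dict[str, List[float]], k: int = 2) -> List[str]:
    """Return the ids of the k stored items most similar to the query embedding."""
    ranked = sorted(index.items(), key=lambda item: cosine(query, item[1]), reverse=True)
    return [doc_id for doc_id, _ in ranked[:k]]


# Hypothetical embeddings for three unstructured items (values are illustrative).
index = {
    "policy.pdf": [1.0, 0.0, 0.0],
    "scan.png": [0.0, 1.0, 0.0],
    "call.wav": [0.7, 0.7, 0.0],
}

print(top_k([1.0, 0.1, 0.0], index, k=2))
```

The retrieved items would then be passed to the model as context for generation; retrieval latency at this step is what the storage design above aims to keep low.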

Securing the Next Generation of AI Infrastructure

As AI continues to transform industries, organizations must build platforms that combine performance, scalability, and trust.

By integrating confidential computing technologies with validated AI infrastructure, enterprises can deploy AI workloads that:

- Protect proprietary model intellectual property

- Safeguard sensitive enterprise data

- Enable secure multi-tenant AI environments

- Support production-scale AI factories

Together, innovations in confidential computing and Lenovo’s hybrid AI infrastructure are helping enterprises unlock the full potential of AI, while maintaining the security and governance required for real-world deployments.

Learn more about Lenovo AI infrastructure and hybrid AI factory solutions at NVIDIA GTC.

Visit www.lenovo.com/hybridai to learn more.

Authors

Carlos Huescas is the Worldwide Product Manager for NVIDIA software at Lenovo. He specializes in High Performance Computing and AI solutions. He has more than 15 years of experience as an IT architect and in product management positions across several high-tech companies.

Pierce Beary is the AI Solutions Product Manager focused on datacenter solutions. He previously worked on the WW HPC team as a product manager for Lenovo EveryScale (LeSI). Prior to joining Lenovo in 2021, Pierce held positions at Exxon Mobil, where he worked as a Mechanical Engineer and Engineering Project Manager. Pierce has a Bachelor’s degree in Mechanical Engineering from North Carolina State University.

Farah Toosi is a Software Product Manager for NVIDIA enterprise software in Lenovo’s Infrastructure Solutions Group. She specializes in AI/ML software infrastructure, GPU orchestration, and enterprise AI platform integrations. She has 8+ years of experience in software product and program management at several high-tech companies.

Trademarks

Lenovo and the Lenovo logo are trademarks or registered trademarks of Lenovo in the United States, other countries, or both. A current list of Lenovo trademarks is available on the Web at https://www.lenovo.com/us/en/legal/copytrade/.

The following terms are trademarks of Lenovo in the United States, other countries, or both:

Lenovo®

ThinkSystem®

Other company, product, or service names may be trademarks or service marks of others.
